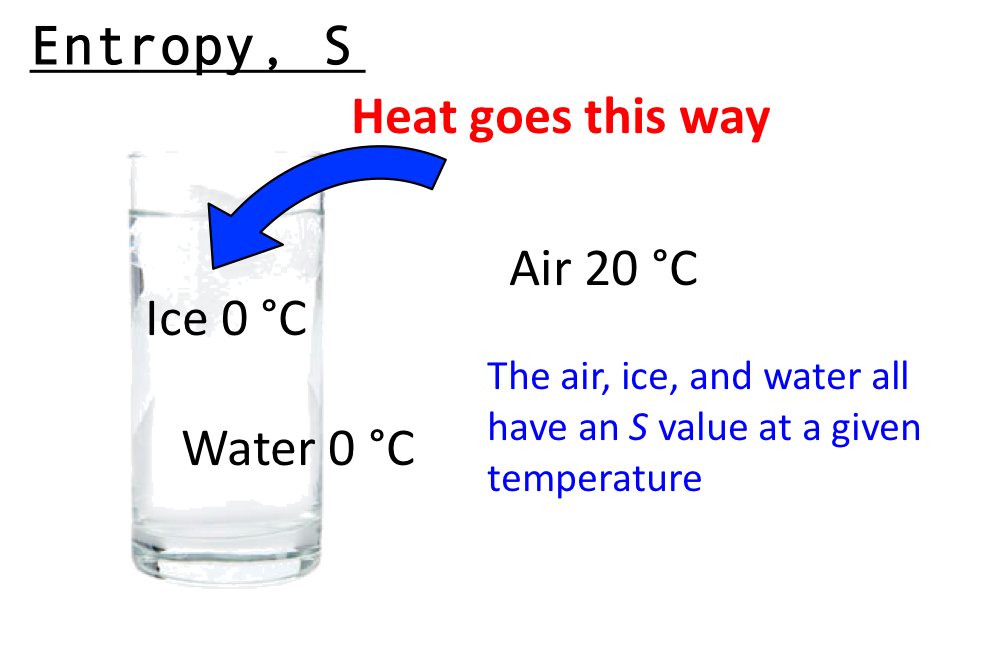

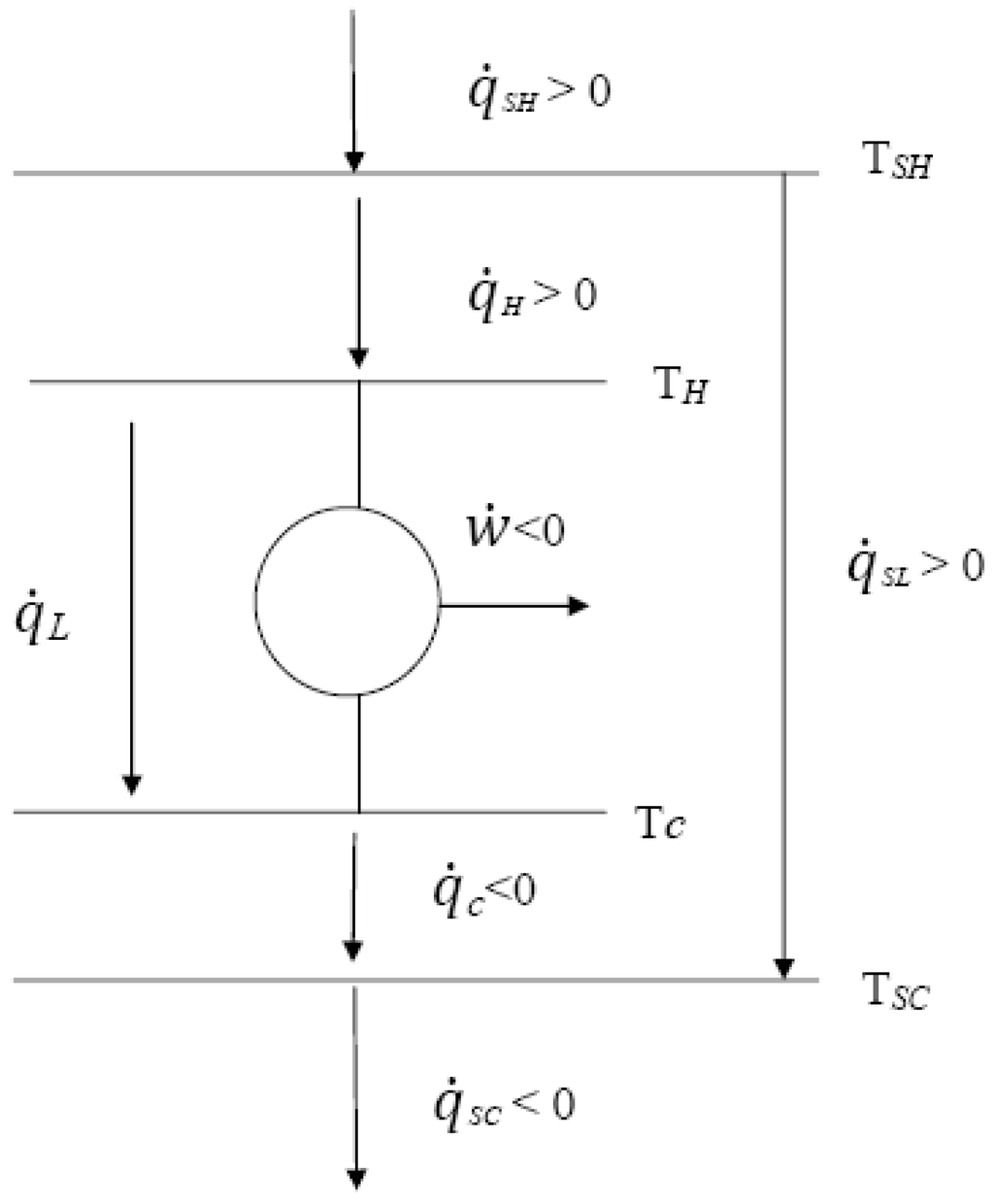

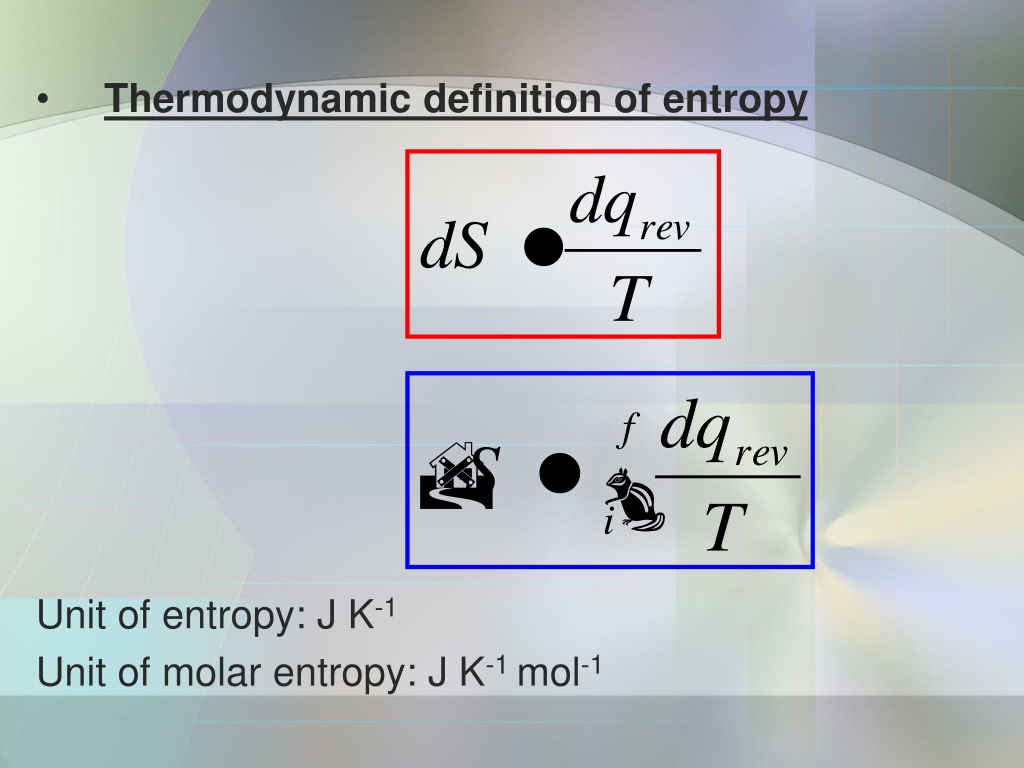

Abstract: Identifying the status of individual network units is critical for understanding the mechanism of convolutional neural networks (CNNs). However, it is still challenging to reliably give a general indication of unit status, especially for units in different network models. To this end, we propose a novel method for quantitatively clarifying the status of a single unit in a CNN using algebraic topological tools. Unit status is indicated via the calculation of a defined topology-based entropy, called feature entropy, which measures the degree of chaos of the global spatial pattern hidden in the unit for a category. In this way, feature entropy can provide an accurate indication of status for units in different networks under diverse situations such as weight-rescaling operations. Further, we show that feature entropy decreases as the layer goes deeper and follows almost the same trend as the loss during training. We show that, by investigating the feature entropy of units on training data only, it is possible to discriminate between networks with different generalization ability from the view of the effectiveness of feature representations.

For a battery-operated lamp, the entropy generation is positive even though the energy is conserved. We can see this as the initial electrical energy from the batteries degrading into heat, which we won't be able to use or convert back into electrical energy.

From the above, you can see that the statement "the entropy of the universe is constantly increasing" indicates that the universe's energy is gradually degrading from high to low quality. At some point, the total amount of energy will be the same, but it will be unable to generate work. We can see how those high-quality sources of energy that we still have are extremely valuable, and it's critical to protect them.

The units of entropy are those of energy over temperature. Entropy does not by itself ensure energy conservation, which is the role of the first law; rather, it tracks how much of the conserved energy can still be used to do work.
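To make the lamp example concrete, here is a minimal sketch of the energy and entropy bookkeeping. It assumes that all of the electrical energy eventually ends up as heat in surroundings at a fixed temperature; the function name and the numbers (a 3 W lamp running for one hour in a 20 °C room) are hypothetical and chosen only for illustration.

```python
def lamp_energy_balance(power_w: float, seconds: float, t_surroundings_k: float):
    """Return (electrical energy in, heat out, entropy generated) for a lamp.

    Assumption: every joule drawn from the battery is eventually dissipated
    as heat into surroundings held at t_surroundings_k (in kelvin).
    """
    if t_surroundings_k <= 0:
        raise ValueError("temperature must be positive (kelvin)")
    electrical_in_j = power_w * seconds
    # First law: energy is conserved, so the heat released equals the energy drawn.
    heat_out_j = electrical_in_j
    # Second law: dumping heat Q into surroundings at T generates entropy Q / T.
    entropy_generated_j_per_k = heat_out_j / t_surroundings_k
    return electrical_in_j, heat_out_j, entropy_generated_j_per_k


# Hypothetical numbers: 3 W for 3600 s in a room at 293.15 K.
energy_in, heat_out, s_gen = lamp_energy_balance(3.0, 3600.0, 293.15)
print(f"energy in = {energy_in:.0f} J, heat out = {heat_out:.0f} J")
print(f"entropy generated = {s_gen:.2f} J/K")
```

The energy in and the heat out are equal, while the entropy generated is strictly positive: the quantity of energy is unchanged, but its quality has dropped.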
When you turn on the lamp, you'll notice the bulb heating up, and this heat is lost to the surroundings. If we apply the first law to this system, we'll see that the energy is conserved.

According to the Boltzmann equation, entropy is a measure of the number of microstates available to a system. The number of available microstates increases when matter becomes more dispersed, such as when a liquid changes into a gas or when a gas is expanded at constant temperature.
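The Boltzmann relation can be written as S = k_B ln W, where W is the number of microstates. The sketch below evaluates it, along with the standard ideal-gas result ΔS = nR ln(V2/V1) for an expansion at constant temperature. The function names are illustrative, and the ideal-gas assumption is ours rather than something stated in the text.

```python
import math

K_B = 1.380649e-23   # Boltzmann constant in J/K (exact SI value)
R = 8.314462618      # molar gas constant in J/(mol K)


def boltzmann_entropy(microstates: float) -> float:
    """Entropy S = k_B * ln(W) for a system with W accessible microstates."""
    if microstates < 1:
        raise ValueError("a system has at least one microstate")
    return K_B * math.log(microstates)


def isothermal_expansion_entropy(moles: float, v_initial: float, v_final: float) -> float:
    """Entropy change n * R * ln(V2 / V1) of an ideal gas at constant temperature."""
    if moles <= 0 or v_initial <= 0 or v_final <= 0:
        raise ValueError("moles and volumes must be positive")
    return moles * R * math.log(v_final / v_initial)


# A single microstate means zero entropy; more microstates mean more entropy.
print(boltzmann_entropy(1))
print(boltzmann_entropy(2) > boltzmann_entropy(1))

# Doubling the volume of 1 mol of ideal gas at constant temperature.
print(f"{isothermal_expansion_entropy(1.0, 1.0, 2.0):.3f} J/K")
```

Expanding the gas gives a positive entropy change, which matches the statement that more dispersed matter has more available microstates.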

As a thought exercise, we can think of a battery-operated lamp. The clumped energy in the batteries is ready to be used at any time to turn on the light bulb. By contrast, we consider heat at low temperatures to be a low-quality form of energy, as it can produce little to no work.
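One way to put a number on "little to no work" is the Carnot limit, which bounds the work obtainable from heat Q supplied at temperature T_hot when the surroundings are at T_cold: W_max = Q (1 - T_cold / T_hot). The Carnot limit is not mentioned in the text above; it is used here as a standard illustration, and the function name and temperatures are hypothetical.

```python
def carnot_work_limit(heat_j: float, t_hot_k: float, t_cold_k: float) -> float:
    """Upper bound on the work obtainable from heat_j supplied at t_hot_k
    when heat is rejected to surroundings at t_cold_k (both in kelvin)."""
    if t_cold_k <= 0 or t_hot_k < t_cold_k:
        raise ValueError("require 0 < t_cold_k <= t_hot_k")
    return heat_j * (1.0 - t_cold_k / t_hot_k)


# The same 1000 J of heat, supplied at two different temperatures,
# with surroundings at 300 K in both cases.
print(carnot_work_limit(1000.0, 600.0, 300.0))            # high-temperature heat
print(round(carnot_work_limit(1000.0, 310.0, 300.0), 2))  # heat barely above ambient
```

Heat that is only slightly warmer than the surroundings can yield just a few percent of its energy as work, which is why it counts as low quality.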

In order to get a more intuitive idea of what entropy represents, it can be helpful to see it as a measure of the quality of the energy. This implies that we can distinguish between "high-quality" and "low-quality" forms of energy. Which are forms of high-quality energy? Those that are readily available to be used, as is the case for chemical energy stored in a battery, electrical energy, mechanical energy, and some fossil fuels.

Entropy is dynamic: the energy of the system is constantly being redistributed among the possible distributions as a result of molecular collisions. This is implicit in the dimensions of entropy, which are energy times reciprocal temperature, with units of J K⁻¹, whereas the degree of disorder is a dimensionless number.

Up to this point, we've gone through entropy's definition, formula, and applications, but if you're still unsure what it represents, you're not alone. In contrast to energy, entropy can feel like an abstract concept that is not so simple to grasp.
